Apple’s AR headset may help a user’s eyes adjust when putting it on

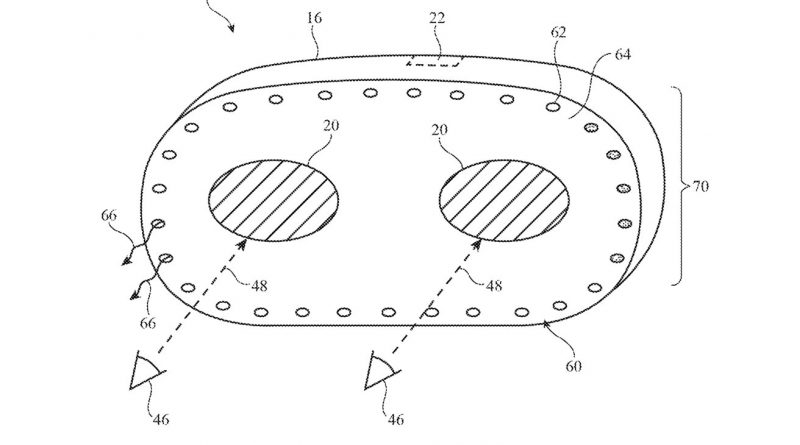

Apple’s solution to this adjustment problem is effectively a lighting system for the inside of the headset, illuminating areas in the periphery of the user’s vision. Using ambient light sensors, the system can adjust its brightness to more closely match the light intensity of the environment, and possibly even the warmth or color of the light itself. This could involve a string of LEDs around the edge, and the patent also suggests using a light guide to divert light into the loop, which could reduce the number of LEDs the system needs.

Motion data from sensors on the headset could be used to adjust the brightness, for example by detecting when a user is putting the headset on or taking it off. Detecting such an action can trigger the system to run through a lighting-up and dimming process. For example, the light loop could be illuminated when the headset detects the user is putting it on, matching the light inside the headset to the outside world. Over time, the light loop can dim down to nothing, gradually allowing the wearer’s eyes to adjust. If the headset detects it is being removed, it can gradually brighten the loop, so the user’s eyes have a chance to start adjusting before they have to deal with the lit environment.

Filed on September 4, 2019, the patent lists its inventors as Cheng Chen, Nicolas P. Bonnier, Graham B. Myhre, and Jiaying Wu.

Scene camera retargeting

The second patent, “Scene camera retargeting,” deals with a problem relating to computer vision and how it relates to the user’s own view. Generally, augmented reality relies on rendering a digital asset in relation to a view of the environment, then either overlaying it on a video feed from a camera and presenting it to the user, or overlaying it on a transparent panel that the user looks through to see the real world. While the techniques at play make sense, there are still issues that platform developers have to overcome. In the case of this patent, Apple believes there may be a mismatch between what a camera observing the scene sees and what the user sees. This happens because the externally facing cameras are positioned further forward and higher than the user’s eyes. That is a completely different viewpoint, and rendering output based on it will not necessarily give the user the illusion that the virtual object is part of their real-world view, because of where it ends up positioned.

The patent deals with how a headset camera’s perspective may not match that of the user’s eyes.

In short, Apple’s patent suggests that the headset could take the camera’s view of the environment, transform it to better match the view from the user’s eyes, then use that transformed image to render the digital object into the real-world scene more convincingly. Because a user’s head moves, and because it takes time for a headset to render a scene object, there may be a small amount of lag in the transformation process that creates the altered-perspective image for rendering. By using the accelerometer, the transformation matrix could also be adjusted further to account for where the headset expects the user’s viewpoint to be a short time in the future.
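
To make the viewpoint mismatch concrete, here is a minimal Python sketch of the general idea, not the method claimed in the patent. It assumes the camera and eye share the same orientation, so the retargeting reduces to a translation, and it uses a simple pinhole projection and a constant-acceleration motion model. The function names, the offset, the focal length, and the latency are all hypothetical values chosen for illustration.

```python
def camera_to_eye(point_cam, cam_offset_from_eye):
    """Re-express a camera-frame point in the eye frame.

    Assumption: both frames have aligned axes, so only a translation
    (the camera's position relative to the eye) is needed.
    """
    return tuple(p + o for p, o in zip(point_cam, cam_offset_from_eye))


def project(point, focal_px):
    """Pinhole projection of a 3D point (z forward) to pixel offsets."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point must be in front of the viewer")
    return (focal_px * x / z, focal_px * y / z)


def predict_point(point_eye, head_velocity, head_accel, latency_s):
    """Shift a world-fixed point to where it will appear after the head
    has moved for latency_s seconds (constant-acceleration assumption)."""
    return tuple(
        p - (v * latency_s + 0.5 * a * latency_s ** 2)
        for p, v, a in zip(point_eye, head_velocity, head_accel)
    )


# Hypothetical setup: camera sits 3 cm above and 5 cm ahead of the eye.
offset = (0.0, 0.03, 0.05)
point_cam = (0.0, 0.0, 1.0)          # 1 m straight ahead of the camera
point_eye = camera_to_eye(point_cam, offset)

pixel_cam = project(point_cam, focal_px=1000.0)
pixel_eye = project(point_eye, focal_px=1000.0)

# Head moving right at 0.1 m/s with a 20 ms render latency.
predicted = predict_point(point_eye, (0.1, 0.0, 0.0), (0.0, 0.0, 0.0), 0.02)
```

In this toy setup the same object that sits at the center of the camera image lands roughly 29 pixels off-center from the eye’s point of view, which is the kind of discrepancy the retargeting step is meant to correct. A real system would also need rotation, lens distortion, and per-pixel depth, none of which are modeled here.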

Apple’s augmented reality headset could automatically account for the difference between a user’s point of view and that of an attached camera, while peripheral lighting could make it easier for a user’s eyes to adjust to wearing an AR or VR headset. Apple is rumored to be working on its own augmented reality headset as well as smart glasses, currently speculated to be called “Apple Glass,” which could also use AR as a way to show information to the user.

The first patent, “Electronic device with adaptive lighting system,” deals with the lesser-considered issue of a user’s vision at the time of donning or removing a VR or AR headset. Apple reckons that users may find the built-in display too dark or washed out when they first put on the headset.
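
The adaptive lighting behavior the patent describes, matching the ambient light when the headset goes on and then fading out, or fading back in when it comes off, could be sketched roughly as follows. This is an illustrative Python sketch under stated assumptions: the linear ramp, the 0-to-1 brightness scale, the fade duration, and the function name `loop_brightness` are all hypothetical and are not taken from the patent.

```python
def loop_brightness(ambient, elapsed_s, fade_s, putting_on):
    """Return the light-loop brightness on a 0..1 scale.

    ambient    -- ambient light level from the sensor, clamped to 0..1
    elapsed_s  -- seconds since the on/off motion was detected
    fade_s     -- length of the fade in seconds (hypothetical tuning value)
    putting_on -- True when donning the headset, False when removing it
    """
    if fade_s <= 0:
        raise ValueError("fade_s must be positive")
    ambient = min(max(ambient, 0.0), 1.0)
    progress = min(max(elapsed_s / fade_s, 0.0), 1.0)
    if putting_on:
        # Start at the ambient level, then dim down to nothing.
        return ambient * (1.0 - progress)
    # Start dark, then brighten toward the ambient level.
    return ambient * progress


# Donning in a bright room: the loop starts at ambient and fades out.
donning = [loop_brightness(0.8, t, 10.0, True) for t in (0.0, 5.0, 10.0)]

# Removing the headset: the loop ramps up toward ambient.
removing = [loop_brightness(0.8, t, 10.0, False) for t in (0.0, 5.0, 10.0)]
```

A linear ramp is the simplest choice here. Since the eye’s adaptation to light is not linear, a real implementation might prefer a perceptual curve, and could also vary the color temperature, as the patent suggests is possible.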
