Computer vision in AI: The data needed to succeed

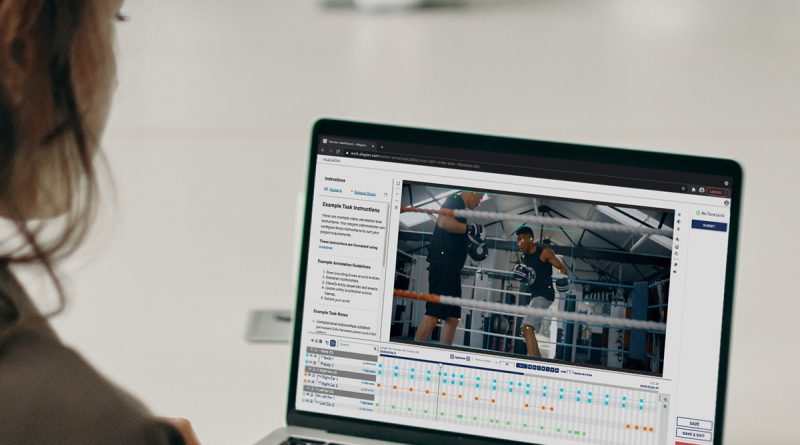

Developing the capacity to annotate massive volumes of data while maintaining quality is a part of the model development lifecycle that businesses often underestimate. It's resource intensive and requires specialized expertise. At the heart of any successful machine learning/artificial intelligence (ML/AI) initiative is a commitment to high-quality training data and a pathway to quality data that is proven and well-defined. Without this quality data pipeline, the initiative is doomed to fail.

Computer vision or data science teams often turn to external partners to build their training data pipeline, and these partnerships drive model performance. There is no single definition of quality: "quality data" is entirely contingent on the specific computer vision or machine learning project. However, there is a general process all teams can follow when working with an external partner, and this path to quality data can be broken down into four prioritized phases.

Annotation criteria and quality requirements

Training data quality is an evaluation of a data set's fitness to serve its purpose in a given ML/AI use case. The computer vision team needs to establish an unambiguous set of rules that describe what quality means in the context of their project. Annotation criteria are the collection of rules that define which objects to annotate, how to annotate them correctly, and what the quality targets are.

Accuracy or quality targets define the lowest acceptable result for evaluation metrics like accuracy, recall, precision, and F1 score. Typically, a computer vision team will have quality targets for how accurately objects of interest were classified, how accurately objects were localized, and how accurately relationships between objects were identified.

Workforce training and platform configuration

Platform setup.
Task design and workflow setup require time and expertise, and accurate annotation requires task-specific tools. At this stage, data science teams need a partner with the expertise to help them determine how best to configure labeling tools, classification taxonomies, and annotation interfaces for accuracy and throughput.

Worker testing and scoring. To label data accurately, annotators need a well-designed training curriculum so they fully understand the annotation criteria and domain context. The annotation platform or external partner should ensure accuracy by actively tracking annotator proficiency against gold data tasks, or by tracking when a judgment is modified by a higher-skilled worker or admin.

Ground truth or gold data. Ground truth data is crucial at this stage of the process as the baseline for scoring workers and measuring output quality. Many computer vision teams are already working with a ground truth data set.

Sources of authority and quality assurance

There is no one-size-fits-all quality assurance (QA) approach that will meet the quality standards of all ML use cases. Specific business goals, as well as the risk associated with an under-performing model, will drive quality requirements. Some projects reach target quality using multiple annotators. Others require complex reviews against ground truth data or escalation workflows with verification from a subject matter expert.

There are two primary sources of authority that can be used to measure the quality of annotations and to score workers: gold data and expert review.

Gold data: The gold data, or ground truth set of records, can be used both as a qualification tool for testing and scoring workers at the outset of the process and as the measure of output quality.
When you use gold data to measure quality, you compare worker annotations to your expert annotations for the same data set, and the difference between these two independent, blind answers can be used to produce quantitative measurements like accuracy, precision, recall, and F1 scores.

Expert review: This method of quality assurance relies on review by a highly skilled worker, an admin, or an expert on the customer side, sometimes all three. It can be used in conjunction with gold data QA. The expert reviewer looks at the answer given by the qualified worker and either approves it or makes corrections as needed, producing a new correct answer. Initially, an expert review may take place for every instance of labeled data, but over time, as worker quality improves, expert review can use random sampling for ongoing quality control.

Iterating on data success

Once a computer vision team has successfully launched a high-quality training data pipeline, it can accelerate progress to a production-ready model. Through ongoing support, optimization, and quality control, an external partner can help them:

- Track velocity: To scale effectively, it's good to measure annotation throughput. How long is it taking data to move through the process? Is the process getting faster?
- Tune worker training: As the project scales, labeling and quality requirements may evolve. This calls for ongoing workforce training and scoring.
- Train on edge cases: Over time, training data should include more and more edge cases in order to make the model as accurate and robust as possible.

Without high-quality training data, even the best-funded, most ambitious ML/AI projects cannot succeed.
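To make the gold data comparison described earlier more concrete, here is a minimal sketch of scoring one worker's class labels against expert (gold) labels for the same items. The function name `score_against_gold`, the example labels, and the choice to score a single class at a time are assumptions made for illustration; they are not taken from any particular annotation platform, and real localization tasks would need an overlap measure on top of this.

```python
from collections import Counter


def score_against_gold(worker_labels, gold_labels, positive):
    """Score worker labels against gold labels for one class of interest.

    Gold labels are treated as the truth. Returns accuracy over all items
    plus precision, recall, and F1 for the `positive` class.
    """
    if len(worker_labels) != len(gold_labels):
        raise ValueError("worker and gold label lists must be the same length")
    if not gold_labels:
        raise ValueError("at least one labeled item is required")

    counts = Counter()
    for worker, gold in zip(worker_labels, gold_labels):
        if worker == gold:
            counts["match"] += 1
        if worker == positive and gold == positive:
            counts["tp"] += 1  # worker correctly marked the class
        elif worker == positive:
            counts["fp"] += 1  # worker marked the class, gold disagrees
        elif gold == positive:
            counts["fn"] += 1  # worker missed an item gold marked

    tp, fp, fn = counts["tp"], counts["fp"], counts["fn"]
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {
        "accuracy": counts["match"] / len(gold_labels),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


if __name__ == "__main__":
    # Hypothetical labels for five images.
    gold = ["car", "car", "truck", "car", "truck"]
    worker = ["car", "truck", "truck", "car", "car"]
    print(score_against_gold(worker, gold, positive="car"))
```

A team could compare the returned scores with its own quality targets to decide whether a worker qualifies or needs further training.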
Computer vision teams need platforms and partners they can trust to deliver the data quality they need and to power life-changing ML/AI models for the world. Alegion is the proven partner to build the training data pipeline that will fuel your model throughout its lifecycle. Contact Alegion at solutions@alegion.com.

This content was produced by Alegion. It was not written by MIT Technology Review's editorial staff.
